Winning the HackGPT 2.0 Hackathon: 2nd Runner-up Position 🧑🏻‍💻

05 Jun, 2024 • 6 min read

How I built a keystroke serialization engine in 24 hours and helped my team place 2nd runner-up at the HackGPT 2.0 hackathon

⏱️ 24 hours of non-stop building. ☕ 12 cups of coffee. 🃏 2 hours of poker (someone had to lose their dignity 😂). And somehow, in between all of that, we shipped a working AI-powered code quality platform that might actually change how developer assessments work.

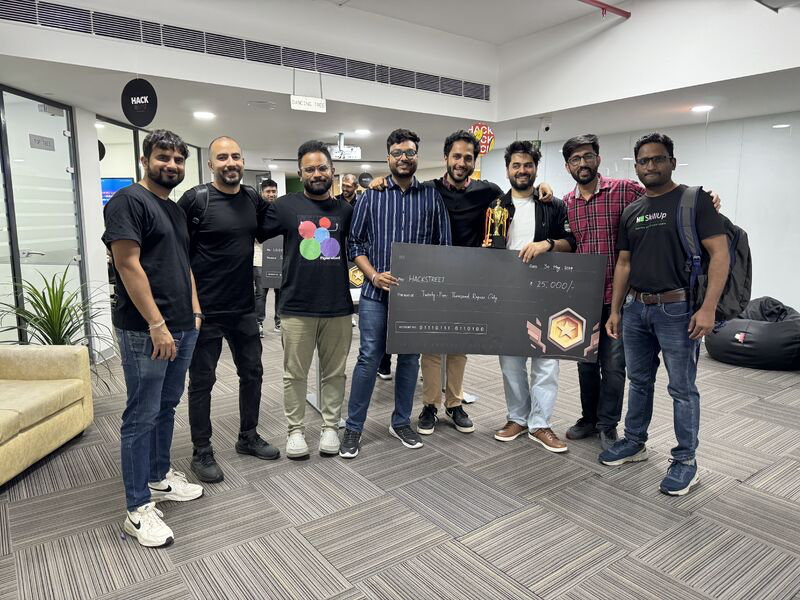

This is the story of HackGPT 2.0, HackerRank's internal 24-hour hackathon during our May P&E Offsite. Our team of 8 engineers placed 2nd runner-up, but the real win was proving something the product team had been theorizing about for months: AI-powered holistic code review isn't just feasible, it's practical at scale.

🎯 The Problem: Scoring Developers on a Single Axis

Here's the reality of how most coding assessments work today: a candidate writes code, the platform runs test cases, and the output is a single number. Pass 7 out of 10 test cases? That's your score.

But anyone who's done a real code review knows that the score tells you almost nothing about the developer. Two candidates can both score 70%, but one of them wrote clean, well-structured code with thoughtful edge case handling, while the other brute-forced a solution with global variables everywhere and got lucky on the test cases.

The current model measures correctness and, to a limited extent, performance. Everything else (code style, maintainability, security patterns, debugging skills, how the candidate actually approached the problem) is invisible.

This has real consequences. At enterprise scale, one of our customers was manually reviewing 74,000 candidates per year to compensate for what automated scoring couldn't capture. That's over 12,000 hours of engineering time spent on manual code review, every single year.

We wanted to build something that could see what the score can't.

🗺️ Designing a Holistic Skill Profile

Before writing any code, we spent the first couple of hours whiteboarding what a "complete" developer evaluation would actually look like. We landed on five dimensions:

✅ Solution: Does the code work? Is it performant? This is the baseline that existing systems already cover.

🧹 Code Quality: Is it readable? Is it maintainable? Would you want to inherit this codebase?

🛡️ Reliability: Does the candidate think about security, scalability, exception handling, and technical design? These are the signals that separate a junior developer from a senior one.

♿ User Experience: Does the code consider accessibility? This is especially relevant for frontend assessments.

🧠 Logical Progression: This is the one that got us most excited. Instead of just reviewing the final submission, what if we could understand how the candidate got there? Their debugging patterns, their persistence when stuck, the moments where they pivoted their approach.

That last dimension is what made our project different from "just another AI code reviewer." We weren't just analyzing code. We were analyzing the process of writing code.

🏗️ The Architecture (Plus 12 Cups of Coffee)

Hour 3. Whiteboard phase over. Coffee cup #3. Time to build.

We designed the system as a standalone platform service, intentionally decoupled from any single product UI so it could eventually plug into screening, interviews, and skill assessments across the board.

The data flow looks like this:

⌨️ Keystroke ingestion:

Assessment Frontend → Attempt Processing Worker → Candidate DB

🔬 Code quality analysis:

Candidate DB → Code Quality Platform Service → Core API

🤖 AI review engine:

Code Review Rubric → Next.js App ↔ AI Platform (LLM)

The Code Quality Platform Service is the central piece. It receives attempt data (including raw keystroke streams), runs a combination of static analysis and LLM-powered review against a configurable rubric, and pushes structured results back to the API layer for rendering.

The Next.js app handles the review orchestration, consuming rubric definitions and coordinating with the AI platform to produce categorized, line-level code comments.

And then there was the part I spent most of my 24 hours on.

⏪ My Contribution: Turning Keystrokes into a Time Machine

Here's the thing about keystroke data: it's raw. Really raw. A stream of individual key events with timestamps. Insert character. Delete character. Cursor move. Paste. Undo. Thousands of events per coding session.

My job was to turn that raw stream into something an AI reviewer could actually reason about.

I built a keystroke serialization engine that reconstructs snapshots of the candidate's code at configurable intervals. Instead of just handing the LLM the final submission and saying "review this," the engine generates a sequence of code states, a snapshot every n keystrokes, that captures the candidate's code at different points in their journey.

Think of it like git commits, but automatic and granular 📸. Snapshot at keystroke 50. Snapshot at keystroke 100. Snapshot at keystroke 150. Each one is a frozen moment of the candidate's progress.
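As a rough illustration of the replay step, here is a minimal TypeScript sketch of keystroke-to-snapshot serialization. The event shapes (`insert`, `delete`, `cursor`, `undo`), the character-offset edit model, and the `serializeSnapshots` name are assumptions for illustration; the post doesn't describe the real stream format. Paste is modeled as a multi-character insert, and undo reverts the most recent edit.

```typescript
// Hypothetical event shapes; positions are character offsets into the buffer.
type KeystrokeEvent =
  | { kind: "insert"; t: number; pos: number; text: string } // typed char or paste
  | { kind: "delete"; t: number; pos: number; length: number }
  | { kind: "cursor"; t: number; pos: number } // cursor moves don't change the text
  | { kind: "undo"; t: number }; // reverts the most recent edit

interface Snapshot {
  eventIndex: number; // how many events have been applied
  t: number; // timestamp of the last applied event
  code: string; // reconstructed code at that point
}

// Replays the stream and emits a snapshot every `gap` events, plus a final one.
function serializeSnapshots(events: KeystrokeEvent[], gap: number): Snapshot[] {
  if (gap < 1 || Math.floor(gap) !== gap) throw new Error("gap must be a positive integer");
  let code = "";
  // Each applied edit pushes its inverse so that "undo" can restore the prior state.
  const undoStack: Array<{ pos: number; removeLength: number; insertText: string }> = [];
  const snapshots: Snapshot[] = [];

  events.forEach((ev, i) => {
    switch (ev.kind) {
      case "insert":
        code = code.slice(0, ev.pos) + ev.text + code.slice(ev.pos);
        undoStack.push({ pos: ev.pos, removeLength: ev.text.length, insertText: "" });
        break;
      case "delete": {
        const removed = code.slice(ev.pos, ev.pos + ev.length);
        code = code.slice(0, ev.pos) + code.slice(ev.pos + ev.length);
        undoStack.push({ pos: ev.pos, removeLength: 0, insertText: removed });
        break;
      }
      case "undo": {
        const inverse = undoStack.pop();
        if (inverse) {
          code =
            code.slice(0, inverse.pos) +
            inverse.insertText +
            code.slice(inverse.pos + inverse.removeLength);
        }
        break;
      }
      case "cursor":
        break;
    }
    const applied = i + 1;
    // Snapshot on every gap boundary, and always keep the final state.
    if (applied % gap === 0 || applied === events.length) {
      snapshots.push({ eventIndex: applied, t: ev.t, code });
    }
  });
  return snapshots;
}
```

With `gap = 50`, a 300-event session yields snapshots after events 50, 100, ..., 300. Always emitting the last state means the submitted code is part of the history even when the session doesn't end on a gap boundary.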

This changes what the AI reviewer can see. It's not just what the candidate wrote, it's how they got there 🕵️.

Did they start with a brute-force approach and refactor? Did they handle the happy path first and then circle back for edge cases? Did they get stuck on a syntax error for 10 minutes or catch it in 30 seconds? Did they delete half their code and restart with a better approach, or did they keep patching a broken foundation?
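Some of these questions can be approximated by comparing consecutive snapshots. Below is a hypothetical TypeScript sketch of two such heuristics; `findPossibleRestarts`, `findSlowIntervals`, and the 50% shrink threshold are illustrative assumptions, not part of the system described here. A long interval could also mean the candidate was reading or thinking, so flags like these are better treated as prompts for a reviewer than as verdicts.

```typescript
interface Snapshot {
  eventIndex: number; // keystroke count at which the snapshot was taken
  t: number; // timestamp in milliseconds
  code: string;
}

// Hypothetical heuristic: if the code shrinks below `keepRatio` of its previous
// size, the candidate may have deleted a large chunk and restarted.
function findPossibleRestarts(snapshots: Snapshot[], keepRatio = 0.5): number[] {
  const flagged: number[] = [];
  for (let i = 1; i < snapshots.length; i++) {
    const prevLen = snapshots[i - 1].code.length;
    if (prevLen > 0 && snapshots[i].code.length < prevLen * keepRatio) {
      flagged.push(snapshots[i].eventIndex);
    }
  }
  return flagged;
}

// Hypothetical heuristic: with a fixed keystroke gap, a long wall-clock interval
// between consecutive snapshots signals slow progress (e.g. stuck on a bug).
function findSlowIntervals(snapshots: Snapshot[], thresholdMs: number): Array<[number, number]> {
  const slow: Array<[number, number]> = [];
  for (let i = 1; i < snapshots.length; i++) {
    if (snapshots[i].t - snapshots[i - 1].t > thresholdMs) {
      slow.push([snapshots[i - 1].eventIndex, snapshots[i].eventIndex]);
    }
  }
  return slow;
}
```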

The configurable gap parameter is where the real tradeoff lives: you tune snapshot frequency based on question complexity. For a quick algorithm question, every 30 keystrokes gives enough resolution. For a longer system design problem, every 100.
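A minimal sketch of the gap parameter as configuration, using the two data points above. `QuestionKind`, `SNAPSHOT_GAP`, and `snapshotCount` are hypothetical names; the count assumes one snapshot every `gap` keystrokes plus a final one when the session doesn't end on a boundary.

```typescript
// Hypothetical question categories; the post only gives these two data points.
type QuestionKind = "algorithm" | "systemDesign";

const SNAPSHOT_GAP: Record<QuestionKind, number> = {
  algorithm: 30, // short problems: finer resolution
  systemDesign: 100, // long problems: coarser resolution keeps the snapshot count down
};

// Snapshots produced by a session: one per full gap, plus one for any remainder.
function snapshotCount(totalEvents: number, gap: number): number {
  if (gap < 1) throw new Error("gap must be at least 1");
  return Math.ceil(totalEvents / gap);
}
```

For a hypothetical 3,000-keystroke session, that works out to 100 snapshots at a gap of 30 versus 30 snapshots at a gap of 100.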

Production tradeoff

More granular snapshots = more LLM calls, higher latency, and higher inference cost. The cost-to-resolution curve is a real production problem this approach needs to address before it can scale.
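To make that tradeoff measurable, the snapshot count can be turned into a rough budget. This sketch assumes one LLM review call per snapshot, which the post doesn't state; `estimateReviewCost` is a hypothetical helper, and per-call cost and latency are inputs you would measure yourself, not figures from the hackathon.

```typescript
interface ReviewCostEstimate {
  llmCalls: number;
  totalCost: number; // in whatever unit costPerCall uses (e.g. cents)
  sequentialLatencyMs: number; // upper bound if calls run one after another
}

// Assumption: one review call per snapshot, so calls scale with 1 / gap.
function estimateReviewCost(
  totalEvents: number,
  gap: number,
  costPerCall: number,
  latencyPerCallMs: number,
): ReviewCostEstimate {
  if (gap < 1) throw new Error("gap must be at least 1");
  const llmCalls = Math.ceil(totalEvents / gap);
  return {
    llmCalls,
    totalCost: llmCalls * costPerCall,
    sequentialLatencyMs: llmCalls * latencyPerCallMs,
  };
}
```

Running calls in parallel cuts wall-clock latency, but under this model total cost still grows linearly with the number of snapshots, so the gap is what controls spend.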

On top of the serialization engine, I built the Next.js frontend that surfaces all of this: the code playback UI, the inline AI review comments, and the attempt timeline that shows the candidate's phases visually.
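A playback UI has to map a position on the timeline to the code state at that moment. Assuming snapshots are stored in timestamp order, a binary search finds the latest snapshot at or before a given time. `snapshotAt` is a hypothetical helper sketched in TypeScript, not code from the actual Next.js frontend.

```typescript
interface Snapshot {
  eventIndex: number;
  t: number; // timestamp; snapshots are assumed sorted by t
  code: string;
}

// Returns the latest snapshot taken at or before time `t`,
// or undefined if `t` precedes the first snapshot.
function snapshotAt(snapshots: Snapshot[], t: number): Snapshot | undefined {
  let lo = 0;
  let hi = snapshots.length - 1;
  let found: Snapshot | undefined;
  while (lo <= hi) {
    const mid = (lo + hi) >> 1;
    if (snapshots[mid].t <= t) {
      found = snapshots[mid]; // candidate; keep searching to the right
      lo = mid + 1;
    } else {
      hi = mid - 1;
    }
  }
  return found;
}
```

Binary search keeps scrubbing responsive even for long sessions, since each lookup is O(log n) in the number of snapshots.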

By hour 18, keystroke-to-snapshot serialization was working end to end. By hour 22, it was integrated with the AI review pipeline and rendering in the UI. The last two hours were pure polish and demo prep (and coffee cups #11 and #12).

📊 What the AI Reviewer Actually Outputs

The review engine produces structured, line-level comments. Not vague "this could be better" feedback, but specific, categorized, severity-tagged observations:

```json
{
  "severity": "High",
  "category": "Code Style",
  "lines": "5-6",
  "comment": "Extensive use of global variables. Leads to unexpected side effects and complicates debugging."
}
```

These comments overlay directly on the code playback, so a reviewer sees the AI's observations at the exact lines where issues appear.
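A UI can only overlay comments it can trust, so it helps to validate each object the model returns before rendering it. This is a minimal TypeScript sketch built around the field names in the example comment above; the `Low` and `Medium` severity values, `toReviewComment`, and `parseLineRange` are hypothetical (the post only shows `High`).

```typescript
// Only "High" appears in the post; "Low" and "Medium" are assumed.
type Severity = "Low" | "Medium" | "High";

interface ReviewComment {
  severity: Severity;
  category: string;
  lines: string; // e.g. "5-6" or "12"
  comment: string;
}

const SEVERITIES: readonly string[] = ["Low", "Medium", "High"];

// Parses "5-6" into [5, 6] and "12" into [12, 12]; returns null if malformed.
function parseLineRange(lines: string): [number, number] | null {
  const m = /^(\d+)(?:-(\d+))?$/.exec(lines.trim());
  if (m === null) return null;
  const start = Number(m[1]);
  const end = m[2] !== undefined ? Number(m[2]) : start;
  return start >= 1 && end >= start ? [start, end] : null;
}

// Validates one raw object from the model's JSON output; null means "drop it".
function toReviewComment(raw: unknown): ReviewComment | null {
  if (typeof raw !== "object" || raw === null) return null;
  const { severity, category, lines, comment } = raw as Record<string, unknown>;
  if (typeof severity !== "string" || SEVERITIES.indexOf(severity) === -1) return null;
  if (typeof category !== "string" || typeof comment !== "string") return null;
  if (typeof lines !== "string" || parseLineRange(lines) === null) return null;
  return { severity: severity as Severity, category, lines, comment };
}
```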

Beyond line-level feedback, the system generates an Attempt Summary, a natural-language overview designed for non-technical hiring stakeholders. It includes a composite score, a phase timeline (initial setup → debugging → edge-case handling → optimization), and structured highlights and lowlights.
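The post doesn't say how the composite score is computed. One plausible approach, sketched here purely as an assumption, is a weighted mean over the five dimensions of the skill profile that skips any dimension that doesn't apply to the question (for example, accessibility on a backend problem). The weights and the `compositeScore` name are hypothetical.

```typescript
type Dimension =
  | "solution"
  | "codeQuality"
  | "reliability"
  | "userExperience"
  | "logicalProgression";

// Hypothetical weights; not taken from the actual system.
const WEIGHTS: Record<Dimension, number> = {
  solution: 0.3,
  codeQuality: 0.2,
  reliability: 0.2,
  userExperience: 0.1,
  logicalProgression: 0.2,
};

// Weighted mean (0-100) over the dimensions that were actually scored;
// the remaining weights are renormalized so skipped dimensions don't drag the score down.
function compositeScore(scores: Partial<Record<Dimension, number>>): number {
  let weighted = 0;
  let totalWeight = 0;
  for (const dim of Object.keys(WEIGHTS) as Dimension[]) {
    const score = scores[dim];
    if (score === undefined) continue;
    weighted += WEIGHTS[dim] * score;
    totalWeight += WEIGHTS[dim];
  }
  if (totalWeight === 0) throw new Error("no dimensions were scored");
  return Math.round(weighted / totalWeight);
}
```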

Key insight

Feeding defined evaluation criteria to the model as structured prompts is what makes LLM code review reliable enough to surface to hiring teams. Without the rubric layer, you get opinions. With it, you get a repeatable process.
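Here is a hedged sketch of what "criteria fed as structured prompts" can look like: the rubric lives as data, separate from the code under review, and is rendered into the prompt alongside the snapshot history with an explicit output schema. The `RubricCriterion` shape, `buildReviewPrompt`, and the prompt wording are assumptions for illustration, not the prompt the team used.

```typescript
// Hypothetical rubric shape, kept as data so it can change without code changes.
interface RubricCriterion {
  category: string; // e.g. "Code Style"
  description: string; // what the reviewer should look for
}

interface CodeSnapshot {
  eventIndex: number; // keystroke count at which the snapshot was taken
  code: string;
}

// Renders the rubric and snapshot history into one prompt with a fixed output
// schema, so every attempt is reviewed against the same criteria.
// Snapshots are assumed to be passed in chronological order.
function buildReviewPrompt(rubric: RubricCriterion[], snapshots: CodeSnapshot[]): string {
  const criteria = rubric
    .map((c, i) => `${i + 1}. [${c.category}] ${c.description}`)
    .join("\n");
  const allowed = rubric.map((c) => c.category).join(", ");
  const history = snapshots
    .map((s) => `--- Snapshot after ${s.eventIndex} keystrokes ---\n${s.code}`)
    .join("\n\n");
  return [
    "Review the candidate's code strictly against these criteria:",
    criteria,
    "",
    'Reply with a JSON array of objects with keys "severity", "category", "lines", "comment".',
    `"category" must be one of: ${allowed}.`,
    "",
    "Code history, oldest snapshot first:",
    history,
  ].join("\n");
}
```

Restricting `category` to the rubric's own labels makes the model's output easy to validate and group, which is the property the rubric layer is meant to buy.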

🌙 24 Hours of In-Office Hackathon Culture

I want to talk about the vibe for a second, because it matters.

There's something different about building alongside your team in a room at 3 AM with empty coffee cups and a deadline. The brainstorming is faster. The decisions are bolder. Nobody's overthinking a PR review. You're just shipping. The energy of an in-office hackathon compresses months of "we should build this someday" into one intense, focused burst.

The first hour was chaos, eight people talking over each other about what to build. Then someone pulled up a whiteboard and it clicked. The next 22 hours were a mix of deep focus sprints, quick syncs standing around someone's laptop, and exactly the right amount of distraction (the poker game was competitive, I'll leave it at that 🃏).

This is the stuff that builds team trust. You learn more about how someone thinks and works in 24 hours of hackathon pressure than in months of regular sprint work.

🚀 From Hackathon to Product

By demo time, we had two things working end to end:

- A fully operational Code Quality Platform Service: keystroke ingestion, snapshot serialization, AI-powered multi-dimensional review, and structured output via API.

- A working frontend integration: inline code review comments, skill dimension indicators, attempt timeline, and natural-language summary, all rendered in the assessment UI.

We placed 2nd runner-up 🥉 out of all teams at HackGPT 2.0. More importantly, the prototype turned a theory the product team had discussed for months into a working, end-to-end demo.

Our CEO later called it out publicly 📢:

That validation felt great 🙌. But what I keep thinking about is the second-order implication: if keystroke data becomes a first-class evaluation signal, the way assessments get designed changes. Question complexity, time windows, the balance between free-form coding and structured problems, all of it needs rethinking. The technology isn't the hard part. Rethinking the assessment design around it is 🤝.

💡 What I Learned

- ⛏️ Keystroke data is an underrated goldmine. Most platforms throw it away or use it only for proctoring. Treating it as a first-class data source for skill assessment opens up an entirely new evaluation dimension.

- 🤖 LLMs are good at code review, with guardrails. You can't just dump code into an LLM and expect consistent, structured output. The rubric-driven approach, where you define evaluation criteria separately and feed them as structured prompts, is what makes the output reliable enough to surface to hiring teams.

- ⚡ Hackathons compress learning. I went deeper on keystroke event processing, snapshot reconstruction, and LLM orchestration in 24 hours than I would have in weeks of regular work. Constraints breed focus.

Eight people with different expertise areas, 24 hours, one shared vision. That's how real systems get built ✨